Excellent website design is essential for every business that wants to succeed online. However, a well-designed website will not be visible to people if it does not rank well on Google. To prevent this, apply a few practical SEO principles. These principles are meant to optimize your content development strategy, and every web designer should know them.

Good web designers think carefully about avoiding the SEO problems that they and their colleagues have run into before. The following SEO principles can help you design websites that rank highly on Google without compromising your style and creativity. You can also read "Complete Step by Step Guide to Learning SEO for Dummies".

1. Ensure That You Have A Search Engine Friendly Site Navigation System.

Using Flash for web navigation is a bad strategy. Flash objects have always been hard for web crawlers to access, and since Adobe ended support for Flash at the end of 2020, modern browsers no longer run it. Unobtrusive JavaScript and CSS can provide all of the fancy effects you want without hurting your rankings on Google.

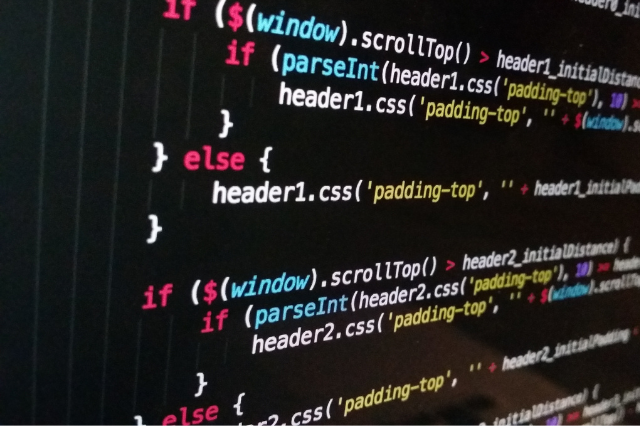

2. Place Scripts Outside Of The HTML Document.

When coding your website, make sure that the CSS and JavaScript are externalized. Most search engines evaluate your website based on the contents of the HTML document. If you do not externalize your CSS and JavaScript, they add extra lines of code to the HTML document, and in many cases that code comes before the actual content. This can slow crawlers down, and search engines are designed to reach the content of a page as quickly as possible.
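As a rough way to see how much inline code a page carries, the sketch below counts the characters inside `<style>` blocks and inside `<script>` tags that have no `src` attribute. It uses only Python's standard `html.parser`; the class name, the function name, and the two sample pages are made up for illustration.

```python
from html.parser import HTMLParser


class InlineAssetCounter(HTMLParser):
    """Counts characters of inline <script> and <style> code in a page."""

    def __init__(self):
        super().__init__()
        self.inline_chars = 0        # characters of inline CSS/JS seen so far
        self._inside_inline = False  # True while inside an inline block

    def handle_starttag(self, tag, attrs):
        # A <script src="..."> is already external, so only scripts without
        # a src attribute are counted, plus every <style> block.
        if tag == "style" or (tag == "script" and "src" not in dict(attrs)):
            self._inside_inline = True

    def handle_endtag(self, tag):
        if tag in ("script", "style"):
            self._inside_inline = False

    def handle_data(self, data):
        if self._inside_inline:
            self.inline_chars += len(data)


def count_inline_chars(html: str) -> int:
    parser = InlineAssetCounter()
    parser.feed(html)
    parser.close()
    return parser.inline_chars


# Hypothetical sample pages: one with inline code, one with external files.
inline_page = (
    "<html><head><style>p{color:red}</style>"
    "<script>var a=1;</script></head><body><p>Hi</p></body></html>"
)
external_page = (
    '<html><head><link rel="stylesheet" href="site.css">'
    '<script src="site.js"></script></head><body><p>Hi</p></body></html>'
)

print(count_inline_chars(inline_page))
print(count_inline_chars(external_page))
```

A page whose count is large compared with its visible text is a candidate for moving that code into separate `.css` and `.js` files.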

3. Insert Content That Is Readable To Search Engine Spiders.

Content is the driving force of your website, and it is what search engine crawlers feed on. When you are designing a website, give the content a neat structure (page headings, links, and paragraphs).

Websites that have minimal content will struggle to appear in search engine results. You can avoid this by planning each design stage properly. For instance, avoid using images as text unless you use the CSS background-image text replacement technique.

4. Design Your Web URLs To Become Friendly To Search Engines.

By friendly URLs, we mean URLs that can be crawled easily and that are not query strings. The best URLs contain keywords that describe what is on the page.

For instance, the following are URLs for the website of an HVAC company:

- Hvaceurope.com/services/maintenance/

- Hvaceurope.com/services/home-repairs/

- Hvaceurope.com/services/office-repairs/

The URLs listed above are all good for SEO on the HVAC Europe website. By comparison, these other URLs are not SEO-friendly:

- Hvaceurope.com/services/repairs-after-purchase

- Hvaceurope.com/hvacservicingforhomesandoffices

- Hvaceurope.com/Hvacservicingforofficesandcompanies

You should also watch out for CMSs that put automatically generated numbers and unique codes in the URLs of web pages. A good content management system lets you customize the URLs of your pages and keep them brief.
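If your CMS lets you set the URL yourself, a small helper can turn a page title into a short, keyword-based URL segment. The sketch below is one possible approach, not a feature of any particular CMS; `slugify` and `service_url` are hypothetical names, and the `/services/` prefix simply mirrors the example URLs above.

```python
import re
import unicodedata


def slugify(title: str) -> str:
    """Turn a page title into a lowercase, hyphen-separated URL segment."""
    # Strip accents so that "Café" becomes "Cafe" instead of being dropped.
    ascii_text = (
        unicodedata.normalize("NFKD", title)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    # Replace every run of non-alphanumeric characters with a single hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", ascii_text.lower())
    return slug.strip("-")


def service_url(title: str) -> str:
    # Hypothetical helper: builds a path under /services/ from a page title.
    return f"/services/{slugify(title)}/"


print(service_url("Home Repairs"))    # /services/home-repairs/
print(service_url("Office Repairs"))  # /services/office-repairs/
```

Separating words with hyphens keeps each keyword readable on its own, which is what the run-together URLs above are missing.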

5. If There Are Pages You Do Not Want Indexed By Search Engines, Block Them

If you do not want certain pages to be indexed by search engines, block them. Some pages, such as server-side scripts, add nothing of value to your web content. Others may be pages you use to try out designs while developing a new website. Do not expose these pages to web robots. They can cause duplicate content problems with search engines, and they can dilute the density of the real content on your website, which will hurt your search positions.

The usual way to keep search engines away from such pages is the robots.txt file. It is one of a handful of web files that can be used to improve a website.
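Before publishing a robots.txt file, it can help to check which URLs its rules actually block. The sketch below feeds a small set of rules to Python's standard `urllib.robotparser` and asks whether a crawler may fetch two URLs. The `/staging/` and `/cgi-bin/` paths and the `is_crawlable` helper are assumptions made for illustration; substitute the directories you really want to hide.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: /staging/ and /cgi-bin/ are placeholder directories.
ROBOTS_TXT = """\
User-agent: *
Disallow: /staging/
Disallow: /cgi-bin/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_crawlable(url: str, user_agent: str = "*") -> bool:
    """Return True if the rules above allow user_agent to fetch url."""
    return parser.can_fetch(user_agent, url)


print(is_crawlable("https://hvaceurope.com/services/maintenance/"))      # True
print(is_crawlable("https://hvaceurope.com/staging/new-homepage.html"))  # False
```

Note that robots.txt only asks well-behaved crawlers to stay away; it does not password-protect anything.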

6. Keep Your Web Pages Updated With New Content

If there is a blog on your website, consider placing excerpts from recent blog posts on every web page. Search engines like it when the content of your pages changes regularly, because this signals that the website is active and functional. Search engines also crawl your website more often when its content changes frequently. Make sure that you show only excerpts rather than full posts, to avoid duplicate content problems.

7. Use Unique Web Metadata

Use different keywords, descriptions, and page titles on each page. Web designers often create a template and forget to switch out the metadata, so other pages end up using the initial placeholder information. Each page should have its own set of metadata. This is one of the elements that help search engines understand the structure of a website.
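Leftover placeholder metadata is easy to catch automatically. The following sketch reads the `<title>` and the meta description from each page and reports any pages that share the same pair. It relies only on Python's standard library; the class and function names, the page paths, and the sample HTML are all hypothetical.

```python
from collections import defaultdict
from html.parser import HTMLParser


class MetadataParser(HTMLParser):
    """Collects the <title> text and the meta description of one page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = (attrs.get("content") or "").strip()

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def extract_metadata(html):
    parser = MetadataParser()
    parser.feed(html)
    parser.close()
    return parser.title.strip(), parser.description


def find_duplicate_metadata(pages):
    """Map each repeated (title, description) pair to the pages that use it."""
    seen = defaultdict(list)
    for path, html in pages.items():
        seen[extract_metadata(html)].append(path)
    return {meta: paths for meta, paths in seen.items() if len(paths) > 1}


# Hypothetical pages: the first two still carry the template placeholders.
TEMPLATE = (
    "<head><title>Page Title</title>"
    '<meta name="description" content="Description goes here"></head>'
)
pages = {
    "/services/maintenance/": TEMPLATE,
    "/services/home-repairs/": TEMPLATE,
    "/services/office-repairs/": (
        "<head><title>Office Repairs</title>"
        '<meta name="description" content="Repairs for office HVAC systems">'
        "</head>"
    ),
}

for (title, description), paths in find_duplicate_metadata(pages).items():
    print(f"{title!r} / {description!r} is shared by {paths}")
```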

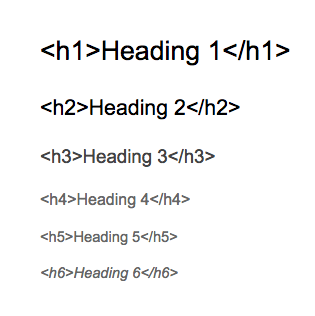

8. Know How To Make Use Of Heading Tags Properly

Make good use of heading tags on your web pages. Heading tags give search engines information about the structure of your HTML document, and search engines often value them more than other text on the page (except perhaps hyperlinks).

Use the h1 tag for the main topic of the web page, and use the h2 to h6 tags properly below it. This shows the hierarchy of the content and delineates blocks of similar content.
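A heading outline can be checked with a few lines of code. The sketch below collects the heading levels of a page in document order and reports two kinds of problems: a page that does not have exactly one h1, and a heading that skips a level (for example an h3 directly after an h1). Treating "exactly one h1" as the rule is an assumption drawn from the advice to use h1 for the page's main topic; the names and sample markup are hypothetical.

```python
from html.parser import HTMLParser

HEADING_TAGS = {"h1", "h2", "h3", "h4", "h5", "h6"}


class HeadingCollector(HTMLParser):
    """Records heading levels (1 to 6) in the order they appear."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if tag in HEADING_TAGS:
            self.levels.append(int(tag[1]))


def check_headings(html):
    """Return a list of human-readable problems with the heading outline."""
    collector = HeadingCollector()
    collector.feed(html)
    collector.close()
    levels = collector.levels

    problems = []
    h1_count = levels.count(1)
    if h1_count != 1:
        # Assumption: one h1 per page, naming the page's main topic.
        problems.append(f"expected exactly one h1, found {h1_count}")
    for previous, current in zip(levels, levels[1:]):
        # Going deeper by more than one level leaves a gap in the hierarchy.
        if current > previous + 1:
            problems.append(
                f"h{previous} is followed by h{current}, skipping a level"
            )
    return problems


good_page = "<h1>HVAC Services</h1><h2>Maintenance</h2><h3>Filters</h3><h2>Repairs</h2>"
bad_page = "<h1>HVAC Services</h1><h3>Filters</h3>"

print(check_headings(good_page))
print(check_headings(bad_page))
```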

9. Use W3C Standards

Search engines like clean, correctly written code. A website built with clean code is indexed more easily, and clean code also reflects how much effort went into building the site. Following W3C standards pushes you toward semantic markup, which is great for SEO.

That's all for this post. I hope you found these SEO principles useful. You can also follow expert SEO tips to increase organic traffic, and if you enjoyed this article, please share it with your friends.